AI Governance Framework

Our Ethos

We believe that sign languages are full, natural languages that deserve the same equality, recognition, respect and protection as spoken languages. Sign language users face barriers across society, whether engaging in the public sphere or on digital platforms. There is a global shortage of sign language interpreters and translators, dissemination of public information in sign language is inconsistent, and many digital and institutional services remain inaccessible to Deaf individuals. In parallel, AI technologies are becoming powerful enablers of language translation, generation and accessibility.

In a world where AI is rapidly reshaping communication, we see a unique opportunity to harness AI’s capabilities to increase accessibility and inclusion for Deaf communities, but only if AI is developed ethically, with and by sign-language users, and in ways that preserve linguistic and cultural integrity.

Our Core Beliefs & Principles

1. Sign Languages Are Legitimate, Rich, and Protected Languages. We recognise sign languages as full languages in their own right, with their own grammar, variation within and between sign languages, and cultural significance, deserving the same respect and protection as spoken languages. The linguistic rights of sign-language users must be upheld.

2. AI as Inclusion, Not Substitution. AI should be used to expand access and inclusion, not to replace trained human interpreters, especially in sensitive or high-stakes contexts such as legal, medical or child protection settings.

When developed responsibly, AI sign-language solutions can unlock access to education, public information, digital spaces, employment and civic participation, creating more inclusive societies.

3. Co-Creation with Deaf Communities. AI systems must be built with and by sign-language users. Deaf people must be meaningfully involved in governance, design, data collection, testing and deployment. Without their involvement, AI risks misrepresenting sign languages and reinforcing inequalities.

4. Ethical Use of Data: Consent, Privacy, Ownership and Reward. Use of captured sign-language data must respect privacy, informed consent, data ownership, fair recompense and transparent terms. Signers should retain agency over how any digital likeness is used.

5. Preserve Linguistic & Cultural Integrity. Sign language AI must support, not erode, the linguistic and grammatical richness inherent in signing. AI must avoid reducing sign languages to oversimplified or flattened representations, whilst also keeping up with neologisms and changes in how the language is used. We aim to preserve and deploy regional signing variations (dialects) wherever technically feasible.

6. Transparency, Accountability & Human Oversight. Users must know when AI is used, why, and how. Signapse processes and systems should allow review, correction and appeal. Human oversight must remain available, and policies including content moderation, use-case risk assessment and quality control must all be applied consistently.

7. Inclusivity & AI. AI sign language technology must be developed with attention to equity, avoiding bias, exclusion or erasure. Datasets, training and evaluation should represent a broad diversity of signers and regional variations, so that AI does not entrench existing inequalities.

8. Using AI to Expand Access and Inclusion.

Our Vision: Using AI, Signapse translates all the world’s words into sign language. We believe in a future where:

- Public services, education, transport, civic information and digital platforms are fully accessible via sign language co-created by AI and humans together.

- Deaf and signing individuals have equal access to opportunities in education, employment and participation, bridging long-standing communication gaps.

- Sign languages are preserved, respected and thrive culturally, including dialects, regional variation and community identity.

- AI aids social inclusion and linguistic justice, rather than reinforcing marginalisation or technological dependency.

Our goal is not simply to build a “translator” but to build a toolset that expands autonomy, inclusion, dignity and opportunity for signing communities worldwide.

---------------------------------------------------------------------------------------------------------------

Content Moderation Policy

1. Purpose & Scope

1.1. This policy sets out how Signapse moderates content that passes through our services, especially video translations into Sign-Language and associated user uploads or client-provided media.

1.2. Our mission is to make communication accessible, safe and inclusive. To support the safety aspect of this, we commit to preventing the creation, translation or distribution of unlawful, harmful or discriminatory content.

1.3. This policy applies to all content processed by Signapse: initial client uploads, AI-translated Sign-Language videos, final outputs, and any user-reporting processes.

1.4. The moderation process covers both pre-translation screening and post-translation review to ensure safe, compliant, high-quality content.

1.5. The policy ensures alignment with our Translation Quality Assurance and Use Case policies, our Ethos and our regulatory obligations. It specifically covers content moderation; the quality of our translations is addressed separately by the Translation Quality Assurance Policy.

2. Principles

2.1 Legality. We will not allow content that breaks UK / EU / international law (e.g., hate speech under the UK’s legislative regime, illegal pornography, incitement to violence).

2.2 Safety & inclusion. We recognise many of our users are from communities using Sign-Language and may be marginalised. The content we support must respect dignity, avoid discrimination, and protect vulnerable individuals.

2.3 Transparency & accountability. We explain our rules clearly (client user-guidelines accessible in plain English and Sign-Language summary), report moderation outcomes periodically, and provide avenues for appeal or review.

2.4 Proportionality. As a small team, we apply moderation in a risk-aware way: fully automated screening of lower-risk content and human review of higher-risk content. We recognise we cannot match large platforms overnight, but we commit to steadily improving.

2.5 Human + AI oversight. Where possible and appropriate we will deploy automated tooling (scripts, filters) to flag potential violations, and human moderators to review flagged content.

2.6 Accessibility & user empowerment. Our moderation guidelines and user-reporting pathways will be accessible (plain language text + Sign-Language summary), so all users understand expectations and rights.

3. Prohibited & Restricted Content

3.1 The following content is strictly prohibited. Any occurrence will typically result in removal or refusal of translation/output, and possible suspension of service:

- Violent extremism, organised hate groups, hate speech targeting protected characteristics (e.g., race, religion, disability, Sign-Language identity).

- Child sexual abuse or exploitation material (CSAM).

- Pornography or explicit adult sexual content intended for arousal.

- Incitement to violence, self-harm or serious illegal acts.

- Impersonation of authorities (e.g., law enforcement), fraud, phishing or financial scams.

- Extremist propaganda. Propaganda is the dissemination of information (facts, arguments, rumours, half-truths or lies) to influence public opinion.

3.2 The following content is restricted i.e. may be allowed under defined circumstances (after extra review), but only if appropriate for context, audience and with safeguards:

- Medical/health advice, legal advice, early years education or financial advice: allowed only if provided by qualified professionals and clearly labelled.

- Political content, adult themes or sensitive social issues: allowed only where relevant and compliant, with additional scrutiny.

For live streaming, the customer is responsible for ensuring compliance with section 3.2 and must clearly sign and agree to this.

4. Operational Framework & Workflow

4.1 Content Flow & Screening

- Client uploads source video → initial automated screening for obvious violations (keyword/metadata filters) → if not flagged, proceed to translation; if flagged or high-risk, a human moderator reviews the content before publishing.

- Translated output (in Sign-Language) may also be subject to automated and human checks, because translation can introduce new risks (e.g., misinterpretation, change of context). We mitigate these risks by applying our QC processes, separately from content moderation.

4.2 Flagging & User-Reporting

- Clients or end-users can report problematic content via support@signapse.com (or a dedicated form).

- Reports trigger human review by the Customer Success Team within a defined SLA of 48 hours.

4.3 Escalation Path

- Level 1: Moderator handles initial review and recommends action (approve, edit, remove).

- Level 2: Senior review by Head of Customer Success for complex/higher-risk cases (e.g., possible hate speech, legal risk).

- Level 3: CEO/CTO signs off on final decisions for major incidents (e.g., service suspension, regulatory notification).

4.4 Appeals Process

- If a client or user disagrees with moderation action, they may appeal. We commit to reviewing appeals in a timely manner (target: within 5 business days).

- The appeals reviewer will not be the same moderator who made the initial decision (ensuring impartiality).

4.5 Transparency & Reporting

- Quarterly internal report: number of uploads, number of flagged items, number of removals/edits, average response time, and number of appeals and their outcomes.

4.6 Data Protection & Privacy

All moderation actions comply with our data protection policy: minimal personal data, secure handling of flagged content, and retention only as long as needed for review.

4.7 Training & Review

- Staff involved in moderation receive training at least annually on this policy and its accessibility implications for Sign-Language users.

- The moderation policy and workflow will be reviewed every 6 months (or sooner following a regulatory change or incident) to reflect technology updates and scale needs.

5. Roles, Responsibilities & Metrics

5.1 Roles

- CEO: owns this policy, approves major escalations and ensures resource allocation.

- CTO: responsible for automation tools and oversight of the human review process; tracks false-positive/negative rates.

- Head of Customer Success: manages user reporting, first-line moderation, metrics collection and user training on guidelines.

6. Final Notes & Review

6.1 Even though Signapse is a small company, we take moderation seriously. A single serious incident could undermine our customers’ trust and damage the brand.

6.2 We commit to being realistic: our moderation process will scale as we grow, but we will abide by these core principles from day one.

6.3 This policy will be published on our website in plain English and supplemented with a Sign-Language summary video for accessibility.

6.4 Review date: [six months from publication].

6.5 Versioning: any material changes will result in a new version (e.g., Version 1.1) and clients will be notified.

7. Supported by BDA Discussion Paper

Sign off:

Date: 09/02/2026

Sally Chalk, CEO, Signapse Ltd.

---------------------------------------------------------------------------------------------------------------

AI & Human Translation Use Policy

1. Purpose & Scope

1.1. This policy sets out when Signapse recommends AI-generated sign-language translation and when we recommend qualified sign-language translators (one-way) or interpreters, across key sectors.

1.2. The purpose is to ensure our AI is deployed safely, appropriately and inclusively, protecting Deaf sign-language users and supporting fair access to information.

1.3. This policy applies to all Signapse products (SignStudio, SignStream, plugins, APIs) and guides customers, partners and internal teams on appropriate use.

1.4. It defines guardrail zones around certain kinds of high-risk work. These are not walls but frames to guide safe innovation.

1.5. This policy recognises sign languages as full natural languages, and communities that use sign languages as their primary language as rights-holders. AI-generated sign-language translation must respect linguistic integrity, cultural identity, consent and human dignity, in line with Deaf-led policy frameworks.

1.6. This policy aligns with:

- Signapse’s Content Moderation and Translation Quality Assurance policies, and our Ethos.

- BDA AI & BSL discussion document.

- Sorenson AI-for-Good sector use case.

- EUD position paper on AI and Sign Language.

- EU AI Act.

- AI Interpreting Solutions Evaluation Toolkit (SAFE AI).

2. Principles

2.1 Safety first. AI may only be used where it is safe, accurate enough for the context, and does not create a risk of harm or miscommunication.

2.2 Human first for high-stakes content. Where decisions affect health, liberty, legal rights, safeguarding or high-risk operations, a qualified interpreter/translator is required. In most cases, we will recommend another provider.

2.3 Transparency & accountability. We explain our rules clearly (client user guidelines accessible in plain English and as a sign-language summary), report moderation outcomes periodically, and provide avenues for appeal or review.

2.4 AI for scale and speed. For routine, repeatable, non-critical content, AI translation may be used to increase accessibility quickly and affordably.

2.5 Context and audience matter. Deaf sign-language users have unique linguistic needs; we must consider grammar, clarity, cognitive load and cultural appropriateness.

2.6 Clarity. We will clearly state where AI is recommended, where our guardrail zones are, and how we escalate borderline content.

2.7 Human + AI oversight. In line with our Content Moderation Policy, all AI deployments include fallback rules, monitoring and human escalation pathways.

2.8 Choice. We support informed choice by stakeholders and principal communicators. Where possible, AI use should be transparent, optional and complemented by access to human interpretation in high-risk contexts. We see our role as a technology provider as working with stakeholder communities to ensure that they have choice in the services they receive and in how our technology is used.

2.9 Consent and representation. AI-generated sign-language output must not imply endorsement, identity or authorship by any individual signer. Where recorded human signers are used, informed consent and appropriate compensation must apply (see Signapse Reward & Remuneration Policy).

3. Sector Definitions

(Used throughout this policy to classify content risk.)

3.1 Transport

The movement of people from one location to another by road, rail, air, water or a combination of these. This includes associated infrastructure, scheduling, passenger announcements, onboard services, customer information, safety briefings, journey updates, transit-centre communications and mobility services.

3.2 Community

General public information, charity notices, cultural content, community-group updates, government informational messaging, social-care updates, and local services.

3.3 Education

Learning content, school/college/university communications, IEPs, safeguarding, curriculum materials, assessment guidance, homework materials, teacher–student–parent interactions.

3.4 Employment

Workplace communication including HR updates, training, safety briefings, internal meetings, performance discussions, management announcements, and customer-facing service content.

3.5 Events and Entertainment

Live or recorded gatherings, performances or presentations designed for public or private audiences, including but not limited to conferences, festivals, concerts, theatre productions, award ceremonies, product launches, sports entertainment, virtual-/hybrid-event broadcasts, streaming content, Video on Demand and cultural/arts programmes.

3.6 Health

Any communication involving diagnosis, treatment plans, test results, symptoms, medication, safeguarding, mental health, risks to life, NHS guidance, or patient-clinician interaction.

3.7 Legal

Any communication that affects legal status, rights, obligations or risks: contracts, tenancy, employment disputes, immigration, consent forms, police or judicial interaction, disciplinary action.

3.8 Safeguarding

Children at risk of harm or in need, and vulnerable adults (e.g., people affected by mental health conditions, learning disabilities, sensory disorders, physical disabilities or substance abuse). This includes anyone a statutory authority would deem at risk or in need.

4. Recommended Use: Human and AI Translation

This section and the matrix follow the same structure as the other sector models and the reference

4.1 Transport

Use a qualified translator/interpreter for:

- Live, two-way interactions with Deaf passengers

- Safeguarding situations involving vulnerable passengers

- Complaints, enforcement, or dispute resolution

AI-generated translation may be used for:

- Routine service announcements and journey updates

- Pre-recorded or scripted passenger information

- Wayfinding, accessibility, and travel guidance content

- Transport websites, apps, and digital displays

4.2 Community

Use a qualified translator/interpreter for:

- Sensitive community issues

- Safeguarding or vulnerable group communication

- Emergency instructions involving risk to life

AI-generated translation may be used for:

- Council announcements

- Local service updates

- Cultural content

- General public-information campaigns

- Non-urgent community news

- Website content

4.3 Employment

Use a qualified translator/interpreter for:

- Contract negotiation

- Grievances, HR disputes

- Performance management

- High-stakes employee conversations

- Communication with Deaf colleagues using sign language in sensitive contexts

AI-generated translation may be used for:

- Routine team communications

- Day-to-day business updates

- Internal training

- Standard operating procedures

- Safety notices once validated

4.4 Education

Use a qualified translator/interpreter for:

- Serious disciplinary meetings

- Individual Education Plan (IEP) discussions

- Safeguarding conversations

- High-stakes teacher–parent meetings

- Complex academic content requiring deep nuance

- Assessment, grading or exam content (AI must not be used for these without explicit regulatory approval)

AI-generated translation may be used for:

- Pre-recorded curriculum videos, depending on quality score and guided by feedback from end users

- Supplementary, non-assessed classroom materials that do not replace live teaching or interpretation

- Routine announcements and general orientation content

- Parent newsletters and non-critical updates

- Website content

4.5 Health

Use a qualified translator/interpreter for:

- any live, interactive health communication

- patient consultations or examinations

- diagnosis, treatment discussions, care planning

- safeguarding or risk-of-harm conversations

- mental-health consultations

- communication with Deaf patients using sign language

AI-generated sign-language translation may be used for:

- General, non-personalised, public health information

- Preventive-care and health promotion campaigns

- Administrative and logistical communication

- Pre-recorded, non-clinical general-audience content

- Appointment reminders or non-consequential updates

- Non-consequential website content

Community sensitivity note (Health):

Signapse recognises that AI use in health-related contexts is particularly sensitive for Deaf communities due to historical barriers to access and the risk of perceived substitution for live interpretation. Even where content is informational and non-clinical, additional care must be taken in framing, labelling, and deployment.

4.6 Events and Entertainment

Use a qualified translator/interpreter for:

- Emotional presentations

- Speaker–audience interaction

- Deaf participants needing live interaction

AI-generated translation may be used for:

- Opening/closing announcements

- General orientation

- Public information displays

- Pre-scripted segments

- Q&A summaries

4.7 Legal

Use a qualified interpreter for:

- Any live interactive legal communication

- Legal advice, rights, obligations

- Contracts or agreements

- Disciplinary processes

- Police, court, or immigration communication

- Anything that may cause loss of liberty or financial consequences

AI-generated translation may be used for:

- General guidance videos

- Basic “how-to” explainers

- Non-binding informational content

- Public awareness campaigns (with care)

4.8 Safeguarding

Always use a qualified human translator/interpreter.

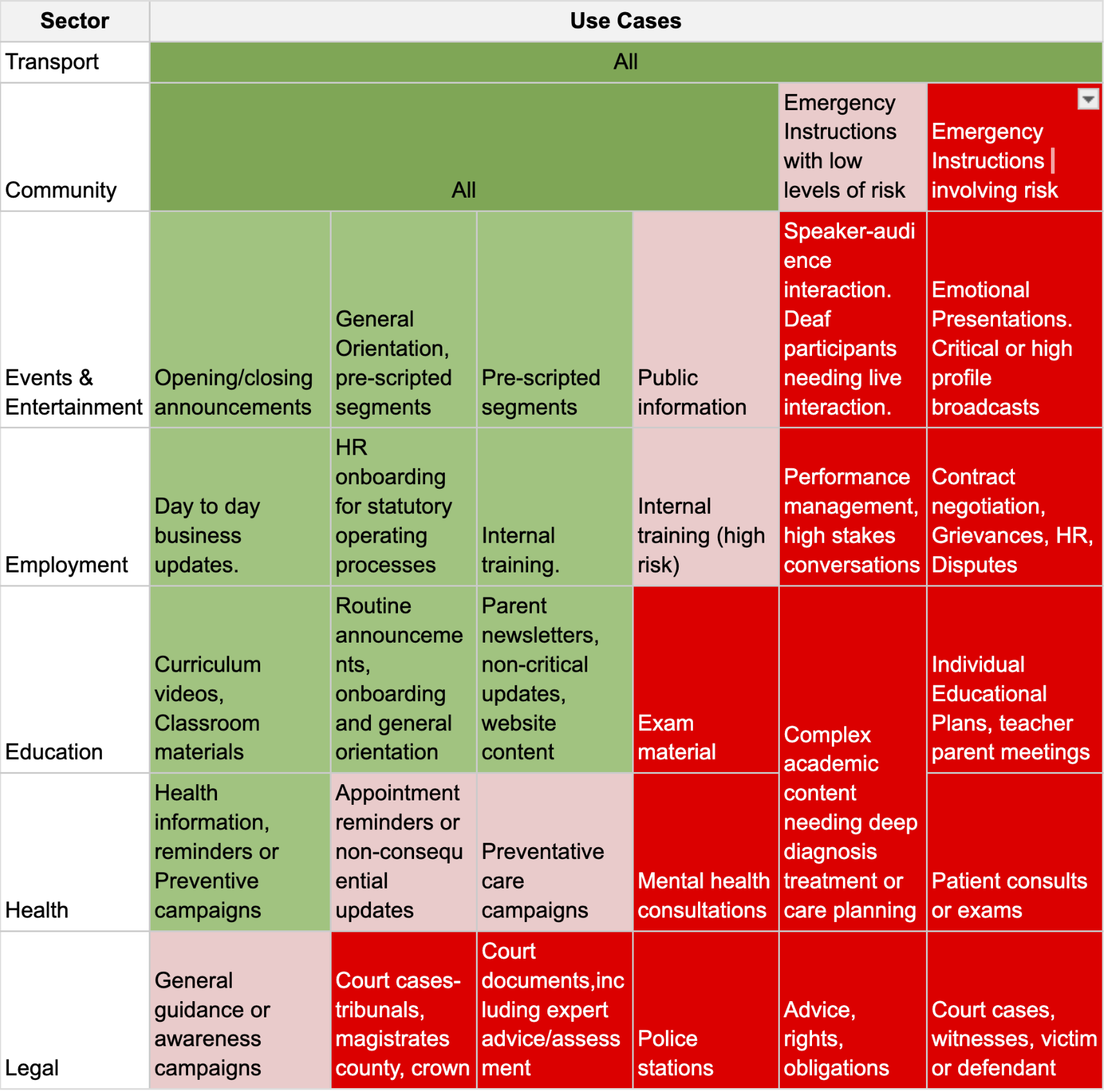

5. Guidance Matrix for Guardrailed Content

Please bear in mind the quality of the AI translation, the speed required (e.g., live translation vs a 5-day turnaround) and the risk of the use case (e.g., Crown Court vs routine transport information at King’s Cross station).

For AI products, we will explicitly make sure that customers agree to our T&Cs, and we will educate customers through the sales and onboarding process. Where we have control, we will label AI-generated content for users (e.g., with an on-screen icon). As with any technology solution, legal and procedural protections are needed. We will capture each customer’s industry on signup and prompt them with a warning if we notice they are using Instant AI in a red-flagged domain.

6. Roles & Responsibilities

- CEO (Policy Owner): Sets risk thresholds; approves sectors where AI can or cannot be deployed.

- CTO: Ensures accuracy thresholds, model guardrails, and safety behaviours.

- Head of Commercial: Manages customer onboarding, educates clients on this matrix, handles escalation.

- Moderation Team: Reviews borderline or guardrailed content before translation; flags inappropriate use.

- Deaf Advisory Board: Provides oversight on community impact and cultural/linguistic accuracy, alongside our Translation Quality Assurance Policy.

7. Escalation Path

If content sits between categories or poses potential harm:

Level 1 – Customer Support

Reviews the use case and checks the sector category within 48 hours.

Level 2 – Moderation Panel

Evaluates technical capability (accuracy, turnaround, safeguards) within 3 working days.

Level 3 – CEO

Gives final sign-off for high-risk deployments (legal, medical, safeguarding) within 5 working days.

8. Alignment with Content Moderation Policy

This Use-Case Policy inherits the following rules from the Content Moderation Policy:

- Prohibited content cannot be translated or published.

- Restricted categories (health/legal/financial advice) must follow all safeguards.

- User reports or concerns about inappropriate AI use follow the same reporting channel: support@signapse.ai.

- Appeals follow the same 5-day SLA.

- Quarterly reporting includes AI-use categories and interpreter-vs-AI decisions.

9. Review & Versioning

9.1 This policy is reviewed every 6 months alongside the Content Moderation Policy.

9.2 Major changes create a new version (e.g., v1.1).

9.3 A sign-language video summary will accompany this policy for accessibility.

Sign-off

Sally Chalk,

CEO, Signapse Ltd.

Date: 04/03/26

---------------------------------------------------------------------------------------------------------------

Signapse Translation Quality Assurance Policy

1. Purpose & Scope

This policy sets out how Signapse ensures the quality of our AI-generated Sign-Language translation services across all products, platforms and use cases. It covers every translation output we deliver, whether client-facing or embedded in third-party services.

The policy ensures alignment with our Content Moderation and Use Case policies, our Ethos and our regulatory obligations. It specifically refers to the quality of our translations rather than any other workflow or KPI.

2. Principles

The following principles guide our work at Signapse. They are based on emerging guidance on AI and automated localisation in general, and on Sign-Language translation in particular.

- Accuracy: Translations must faithfully and clearly convey the original meaning, structure and intent of the source material.

- Clarity & Usability: Outputs must meet the needs of Deaf Sign-Language users and other stakeholders, using appropriate language, grammar and non-manual features.

- Relevance: We aim for style and tone to remain appropriate across registers (informal vs formal, community vs business).

- Consistency: Language and terminology should remain consistent across projects, platforms and versions.

- Risk-awareness & Suitability: We adopt strict guardrails, QA and human oversight where the risks are higher (legal, safeguarding and health). Please see our Use Case policy for more information.

- Continuous Improvement: We collect feedback, measure performance and evolve our processes and tools. We are working towards AI QC tools that may include language detection and verification.

- Transparency: We clearly state when a translation is AI-generated.

3. Statutory & Regulatory Frameworks

- Artificial Intelligence Act (EU) for AI systems (transparency, human oversight, documentation).

- Accessibility obligations e.g., UK Equality Act 2010, accessible information standards, European Accessibility Act 2025.

- Data governance, privacy and security standards (GDPR, UK Data Protection Act) for translation workflows and user data.

4. Application of our quality processes

4.1. Internal Workflow.

This applies to SignStudio QC AI.

1. Intake & classification: Identify source content, target audience (Sign-Language users, spoken language users), sector (health, legal, etc) and risk level.

2. Method determination: Based on classification and our Use Case Policy, determine whether AI-generated translation is appropriate.

3. Translation / Generation: Run AI model (if applicable) and assign human QC if appropriate.

4. Quality-Check / Review: Apply QA checklists: linguistic, functional, cultural, legal dimensions. Confirm accuracy, clarity, usability.

5. Sign-off & Delivery: Embed metadata/labels as AI-generated, deliver to client/user.

6. Feedback & Monitoring: Collect user feedback, record performance metrics, log incidents/fallbacks, and escalate if required.

7. Continuous improvement: Review metrics and feedback, feed them into roadmap updates, and update workflows, models and human training/guidance.

- We maintain a translation workflow guide, glossary/style guide for Sign-Language users, standard operating procedures (SOPs) and QA checklists.

- Each translation that goes through the AI QC process is reviewed by our internal team. If the AI model produces an incorrect sign for a gloss, or if a required gloss is missing, this is flagged to our research team to update or record the correct gloss. We aim to remove the manual steps in this process and make feedback faster, more streamlined and automatic, enabling the team to evolve and improve our models more quickly.

Both Instant AI and AI QC outputs go through review: AI QC output is reviewed during delivery, and Instant AI output is reviewed after delivery. All findings from the review process are used to improve the models and the quality of translation. The review process is as follows:

Internal

- Our Sign-Language Production Framework (SLPF) tiers and roadmap stages guide which sectors should be guardrailed.

- Performance is measured on the following criteria:

- SignStream 5-Star System

- Deaf staff review against our scoring. We are aware of the limitations of this, e.g., “marking our own homework” and “getting used to systems”.

- Deaf Advisory Board.

- We have a clear and simple feedback mechanism in the SignStream web plug-in.

External

- Monthly user-group meetings. We take translations generated instantly using SignStream and share these videos with our Deaf user groups to understand how we can improve both video quality and translation accuracy. For example, during the British Airways (BA) POC, we brought the gate announcement recordings to a user-testing group to gather detailed feedback and identify key improvement points.

- Consultation with Deaf organisations, e.g., RAD, Deafness Support Network, BDA, Action Deafness.

- 100 Deaf people project

- Bespoke projects for trials and POCs, e.g. British Airways, Doncaster DeafClub for LNER, Jewish Deaf Association for SWR.

- When working with clients we will often support them to develop their own rubrics and QA frameworks as required, e.g. Amazon Prime’s quality rubric.

5. Roles

- CEO (Sally Chalk): Policy owner; oversees overall QA translation strategy, allocates resources, approves KPIs.

- CS (Ben Saunders): Responsible for QA processes, AI model quality, and research tools that improve QA and move it towards automation.

- CTO (Alex Le Peltier): Responsible for translation technology stack, AI model quality, data governance, and monitoring performance.

- Head of Quality / QA Manager (Rachel Benyon): Owns QA checklists, review workflows, Sign-Language specialist coordination and monthly reporting.

- CRO (Will Berry): Manages client/user feedback, coordinates corrective actions, liaises with the Product, Engineering and Research Team.

- Human Translators / Sign-Language Specialists

- All Staff / Partners: Responsible for following workflows, flagging quality issues, and supporting continuous improvement.

6. Metrics

- SignStream 5-Star System: Average monthly ratings are published; any rating below our defined threshold triggers a review within 5 working days.

- AI Translation Accuracy: Daily/weekly measurement of understandability and correctness (e.g., target 60% Stage 1, 80% Stage 2, 98% Stage 3 per roadmap).

- Turnaround Time: Instant AI is 30 seconds; QC AI is to be confirmed.

- Error Rate: Defined by severity (omissions, mistranslations, misinterpreted sign grammar) – benchmark and aim for continuous reduction.

- User Satisfaction: Achieve ≥90% satisfaction for translated content by March 2026.

We will measure this by ensuring that both Instant AI and AI QC support in-product feedback, and by having Customer Success and Product work closely with our Deaf Impact Officer to run regular user-feedback sessions with both new and existing clients. These insights will guide continuous improvement across our AI models and product experience.

- Escalation & Fallbacks: Number of fallback events (AI→human) and incident logs, tracked monthly; aim to decrease use of fallback in defined use cases.

7. Review

- This QA Translation Policy will be reviewed at a minimum every 6 months, or sooner if a significant change (technology, regulation, market) occurs.

- Quarterly internal audits will evaluate adherence to workflow, QA metrics and Sign-Language specialist input.

- Annual large-scale external review by the Deaf Community to ensure cultural/linguistic integrity and suitability.

- Updates will be versioned (e.g., v1.1, v1.2) and communicated to all staff, contractors and partners; they will be available on the company intranet and to clients on request.

Sign‑off

Sally Chalk

CEO, Signapse Ltd.

Date: 09/02/2026